
Fudan University, Google Research


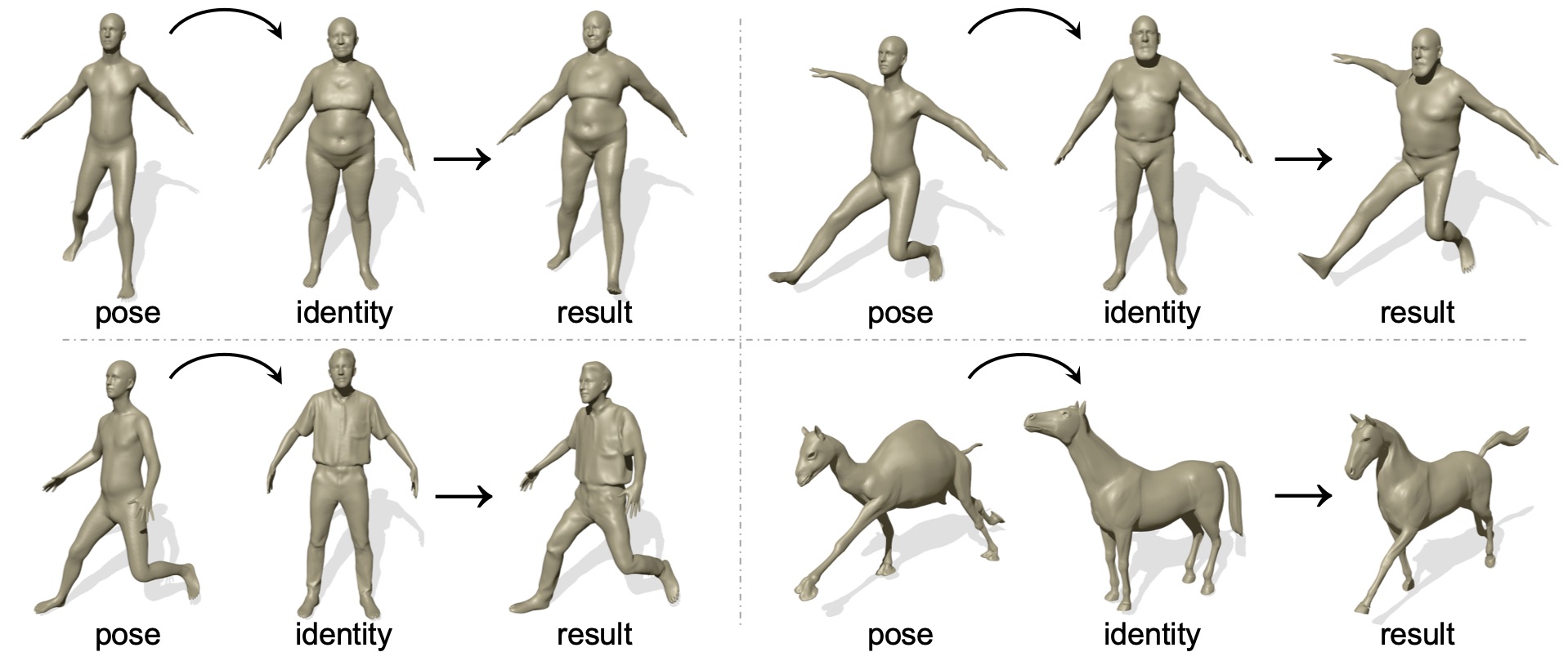

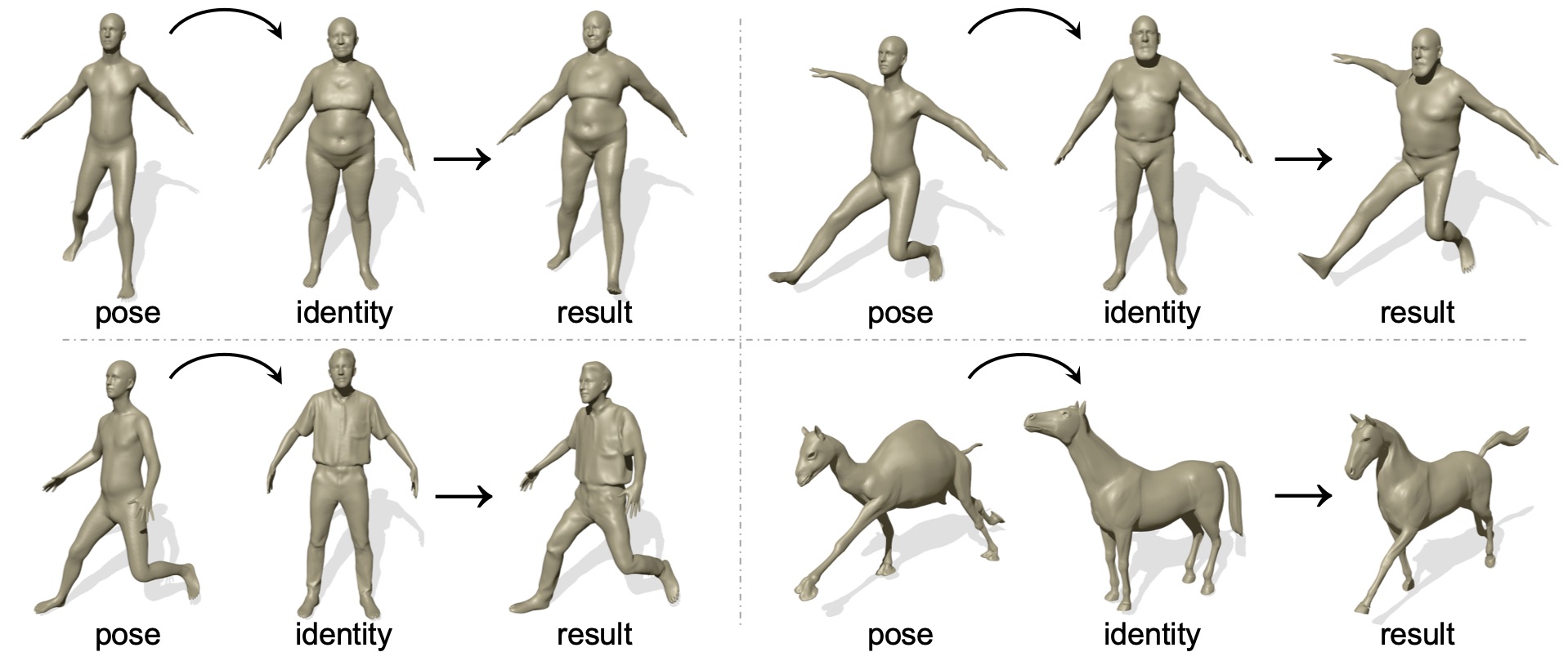

Pose transfer, in which the pose of a source mesh is applied to a target mesh, has been studied for decades. In this paper, we are interested in transferring the pose of a source human mesh to deform a target human mesh, where the source and target meshes may have different identities. Traditional methods assume that paired source and target meshes exist with point-wise correspondences given by user-annotated landmarks or mesh points, which requires heavy labelling effort. Deep models, on the other hand, generalize poorly when the source and target meshes have different identities. To overcome this limitation, we propose the first neural pose transfer model that solves pose transfer using the latest techniques for image style transfer, leveraging a newly proposed component: spatially adaptive instance normalization. Our model does not require any correspondences between the source and target meshes. Extensive experiments show that the proposed model can effectively transfer deformation from source to target meshes and generalizes well to unseen identities or poses.
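To give a rough feel for the component named above, the following is a minimal NumPy sketch of a spatially adaptive instance normalization step. It is not the released implementation. It assumes that per-vertex features are normalized per channel across vertices, then modulated by a scale and bias predicted at each vertex from a conditioning mesh. The function names, the single linear maps `w_gamma` and `w_beta`, and all shapes are hypothetical choices made for illustration.

```python
import numpy as np


def instance_norm(features, eps=1e-5):
    """Normalize each channel across vertices. features has shape (C, N)."""
    mean = features.mean(axis=1, keepdims=True)
    var = features.var(axis=1, keepdims=True)
    return (features - mean) / np.sqrt(var + eps)


def spatially_adaptive_in(features, cond_vertices, w_gamma, b_gamma, w_beta, b_beta):
    """Modulate normalized features with per-vertex scale and bias.

    features:      (C, N) per-vertex features
    cond_vertices: (N, 3) coordinates of the conditioning mesh (assumption)
    w_gamma/w_beta: (C, 3) hypothetical linear maps; b_gamma/b_beta: (C,)
    """
    # Each vertex gets its own scale and bias, hence "spatially adaptive".
    gamma = w_gamma @ cond_vertices.T + b_gamma[:, None]  # (C, N)
    beta = w_beta @ cond_vertices.T + b_beta[:, None]     # (C, N)
    return gamma * instance_norm(features) + beta


rng = np.random.default_rng(0)
C, N = 8, 100  # illustrative channel and vertex counts
features = rng.normal(size=(C, N))
cond = rng.normal(size=(N, 3))

out = spatially_adaptive_in(
    features, cond,
    rng.normal(size=(C, 3)), np.ones(C),
    rng.normal(size=(C, 3)), np.zeros(C),
)

# With zero weights, unit scale and zero bias, the layer reduces to plain
# instance normalization.
plain = spatially_adaptive_in(
    features, cond,
    np.zeros((C, 3)), np.ones(C),
    np.zeros((C, 3)), np.zeros(C),
)
```

Because the scale and bias depend only on each vertex of the conditioning mesh, this sketch needs no correspondence between the two meshes. It does assume that the feature columns and the conditioning vertices share the same ordering.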


J. Wang, C. Wen, Y. Fu, H. Lin, T. Zou, X. Xue, Y. Zhang. Neural Pose Transfer by Spatially Adaptive Instance Normalization. CVPR, 2020. [arXiv] [GitHub]

Acknowledgements

This work was supported in part by NSFC Projects (U1611461), Science and Technology Commission of Shanghai Municipality Projects (19511120700, 19ZR1471800), Shanghai Municipal Science and Technology Major Project (2018SHZDZX01), and Shanghai Research and Innovation Functional Program (17DZ2260900). The website is modified from this template.
